Introduction

Social media have become indispensable in modern political campaigning, offering candidates new ways to reach and engage with voters (Jungherr et al., 2020; Vergeer et al., 2013). Where once politicians relied on in-person encounters – speaking from soapboxes in parks or addressing patrons in noisy pubs – they now have access to widely used digital platforms that amplify their messages to a much broader audience (e.g., Kruikemeier, 2014; Jungherr, 2016; Evans et al., 2014; Barberá et al., 2019; Vergeer, 2013). But how do political candidates use social media on the campaign trail? And how does what they post vary according to the ‘type’ of politician they are? These are the questions that we address in this study.

We examine the use of Twitter (now X) during the 2020 Irish general election, a case that offers insights into social media as a campaign tool in systems where candidates face a competitive electoral environment but have limited resources. Irish candidates face significant pressure to distinguish themselves from other candidates in 3–5-seat multi-member districts. This pressure to stand out is compounded by larger parties often running more than one candidate in a given constituency, potentially diluting the party label and splitting the vote (Sudulich & Wall, 2009). At the same time, campaign finance rules in Ireland are particularly strict, placing a premium on cost-effective communication strategies. With spending caps ranging from €30,150 to €45,200 per candidate (depending on constituency size), candidates must make strategic decisions about how to allocate limited campaign resources. In this context, social media platforms like Twitter offer candidates a low-cost way to differentiate themselves from rivals, both within their own parties and across party lines.

Our study combines extensive hand-coding of text-only and image-based tweets with state-of-the-art machine learning classifiers that rely on transformer-based embedding techniques with transfer learning. This allows us to test our pre-registered hypotheses about whether candidates’ emphasis on policy or electioneering content varies based on their competitiveness, experience, or gender.1 First, we hypothesised that more competitive candidates would prioritise policy content over electioneering. However, our results show little evidence that competitiveness significantly influences the likelihood of posting either type of content. Second, we expected less experienced candidates to focus more on electioneering, while more experienced candidates would emphasise policy. The results partially support this: we observe a small increase in policy-related tweets among more experienced candidates. Interestingly, when accounting for public engagement (e.g., retweets), we also find – contrary to our expectations – that experienced candidates are more likely to post electioneering content as well. Third, we developed competing hypotheses about how gender might influence campaign messaging. Here, our findings are clear: male and female candidates post similar content, suggesting that gender does not significantly shape the type of campaign messages shared on Twitter.

Our results contribute to our understanding of the role of social media in modern election campaigns and how different politicians use the platform to pursue their electoral purposes. The findings also contribute to an ongoing methodological debate on how to classify – at scale – social media content in politically salient categories.

Social Media as a Campaign Tool

Politicians have multiple channels available to them to communicate with potential voters, for example, through parliamentary speeches (Proksch & Slapin, 2015), parliamentary questions (Sorace, 2018; Müller & Ncib, 2024), appearances in traditional media (Park & Suiter, 2021), constituency work, door-to-door campaigning (Marsh, 2004), and also social media. Social media are a particularly cost-effective tool politicians use to communicate their competences and what they stand for. First, messages that politicians post on social media are frequently picked up in traditional media outlets (Gilardi et al., 2022). According to Jungherr (2014, 2), “tweets become public record and are increasingly incorporated into traditional journalistic coverage of political events.” A recent study even suggests that Twitter visibility improves candidates’ electoral performance (Kartsounidou et al., 2023). Second, social media allow candidates to bypass traditional media outlets altogether and directly communicate with their audience (Theocharis et al., 2016). Social media are a cheap and effective instrument for politicians to shape their public persona, and to communicate with other politicians, journalists, and voters who are present on these networks.

Having an active presence on social media is an increasingly important tool for political candidates that they can put to work for different campaign purposes. They may use their account to attack their opponents (Gross & Johnson, 2016; Ceron & d’Adda, 2016); test the popularity of their ideas (Jungherr et al., 2020); directly communicate with their followers (Theocharis et al., 2016); or capture the attention of traditional mass media outlets (Gilardi et al., 2022). While these activities all play a role in modern campaigns, they are secondary to two core objectives shared by all candidates seeking the vote: ensuring that potential voters recognise their connection to the constituency and know what policies they stand for. In our analysis, we examine how candidates – or their campaign teams (Bauer et al., 2023) – use Twitter in support of these two core objectives by identifying the extent to which politicians highlight their policy positions or emphasise their campaign activities in their constituency. Policy positions can be communicated in tweets that explicitly signal a politician’s views on a policy or show the politician claiming credit for a policy success. Campaign activities, on the other hand, are evident in politicians’ use of ‘electioneering tweets’, which, for example, announce campaign events, election materials, or show the politician or their supporters canvassing to get out the vote (Huwyler et al., 2025). In what follows, we develop our pre-registered expectations about how electoral candidates with different degrees of competitiveness and experience and who vary in their gender choose to prioritise policy content and campaign activities on social media.

Theoretical Expectations

Campaign politics is seemingly simple: voters prefer recognisable candidates who implement policies they support (Schumacher & Elmelund-Præstekær, 2018). As noted by Mondak (1995, 1045), voters want “representatives whom we can trust, and we want representatives who can get the job done.” Candidates, in turn, want to communicate to voters what policies they stand for and their connection to the constituency (Bowler et al., 2020). Yet this apparent simplicity masks a more complex reality; how candidates pursue these goals often depends on who they are and where they stand in the campaign cycle (Silva et al., 2024). A competitive candidate may see little benefit in further emphasising constituent ties. An experienced politician might focus more on policy, believing name recognition is already secure. And gender may also play a role, with female candidates potentially placing different emphasis on policy versus electioneering. In what follows, we discuss the theoretical underpinnings for each of our three pre-registered expectations.

Competitiveness

A candidate’s level of competitiveness can shape how they communicate online, as part of broader strategic decisions about where to allocate limited campaign resources. Research on electoral competition suggests that candidates with varying chances of success will allocate their limited campaign resources differently to maximise expected returns (Cox, 1999; Jacobson, 2015). Less competitive candidates in candidate-focused electoral systems such as Ireland (Bowler & Farrell, 2011; Sudulich & Trumm, 2019) face pressure to boost their name recognition and to communicate their value to constituents. They must overcome information asymmetries and low baseline name recognition that disadvantage them relative to more competitive candidates (Zaller, 1992). Less competitive candidates that lack name recognition face higher costs in convincing voters of their viability, especially since voters often rely on cognitive shortcuts and prior expectations when evaluating candidates (Lupia & McCubbins, 1998). In the Irish context, door-to-door canvassing, election posters, and distributing election flyers are traditional ways for a candidate to meet such costs (Marsh, 2004), but social media has provided a new and widely-adopted set of opportunities to appeal to voters (Sudulich & Wall, 2009). As a result, we expect that less competitive candidates will be more likely to resort to electioneering tweets in order to signal that they are running an active campaign and engaging directly with voters in their constituency. In contrast, competitive candidates enjoy higher baseline name recognition and credibility, reducing their need for visibility-building activities (Jacobson, 2015). These candidates can instead focus on demonstrating policy competence, a strategy that can improve their chances of success by highlighting substantive policy achievements rather than campaign effort. 
They can rely on some pre-existing level of name recognition and adapt their communications strategy to signal their value as policymakers:

H 1 (Competitiveness): More competitive candidates are less likely to send out electioneering tweets and more likely to send out policy tweets.

Experience

Our next expectation concerns a candidate’s political experience. Some candidates enter the campaign trail for the first time, whereas others have experience from previous elections. Experienced candidates have had opportunities to build policy portfolios through legislative achievements, constituency service, or ministerial roles (see e.g., Baturo & Elkink, 2022). These accomplishments represent valuable political assets that can be strategically deployed during campaigns (Fenno, 1978). As Mayhew (1974) argues, experienced politicians engage in “credit claiming” for past policy successes, which serves both to demonstrate competence and differentiate themselves from inexperienced challengers. Experienced candidates will also likely enjoy at least some face recognition in their local constituency due to past campaign efforts or their “media capital” (Davis, 2010).

In contrast, inexperienced candidates lack established policy records and must instead rely on campaign activity to signal their viability and commitment to voters. New candidates must invest more heavily in relationship-building and visibility-enhancing activities to compensate for their deficit in established social capital, networks and recognition (Coleman, 1988). Electioneering content helps serve this function by demonstrating active campaigning and grassroots mobilisation.

The “electoral experience effect” documented in campaign finance research further supports this reasoning: experienced candidates are more effective at converting resources into votes precisely because they can rely on established reputations rather than having to build recognition from scratch (Jacobson, 2015). This advantage allows experienced candidates to focus on policy communication, while inexperienced candidates must prioritise visibility-building activities, and so we hypothesise that:

H 2 (Experience): More experienced candidates are more likely to send out policy tweets and less likely to send out electioneering tweets than less experienced candidates.

Gender

Our final hypothesis concerns the gender of the candidates running for office. Gender shapes political communication strategies as a result of structural inequalities and gendered expectations in electoral politics (Dolan, 2014; Lawless & Fox, 2010). Female candidates still face an uphill battle in electoral campaigns (Brennan & Buckley, 2017). They tend to be held to higher standards than male candidates (Fulton, 2012) and are associated with stereotypical ‘feminine’ qualities that have little to do with their actual qualities as politicians, the so-called double-bind dilemma (Huddy & Terkildsen, 1993; Boussalis et al., 2021). Female candidates were in a minority in the Irish general elections of 2020: of the 532 candidates running for office, approximately 174 (33%) were women. Female deputies in the Irish Dáil are also in the minority (following the 2020 general election, 36 out of 160 or 22.5% were women). Twitter is a low-cost tool to challenge such stereotypes, allowing women candidates to engage in “strategic attempts” to signal their competence (Wagner et al., 2017), even though some studies suggest that high-status female politicians tend to be targeted with online abuse (Rheault et al., 2019).2

We posit two competing hypotheses relating to gender and social media content. First, with female candidates in the minority in the Irish electoral context, they may need to increase face recognition and engagement to increase their popularity and ability to attract votes relative to male candidates. Female candidates will need to signal that they are ‘electable’ and connected to their constituents, and they can do so by prioritising electioneering tweets relative to male candidates. Second, with evidence showing that female candidates need to be of higher quality than their male counterparts to receive a similar amount of votes (Brennan & Buckley, 2017; Fulton, 2012), this may create an incentive for female candidates to signal their policy competence to counter gendered assumptions about political competence (Schneider et al., 2016). They can do so by prioritising policy tweets, in which they highlight their policy positions or claim credit for policy successes. A recent field experiment in the United States shows that voters send more requests to female than to male legislators, providing evidence that constituents ask female politicians to be more active than male politicians (Butler et al., 2022). Applying these findings to online communication could suggest that female politicians have to signal to voters that they are working hard. This could result in posting more policy-related content and/or more electioneering-related content than their male colleagues.

Given these competing theoretical predictions, we propose competing hypotheses regarding gender effects on campaign communication strategies:

H 3a (Gender): Female candidates are more likely to send out electioneering tweets than male candidates.

H 3b (Gender): Female candidates are more likely to send out policy tweets than male candidates.

Research Design

The workflow used to create our dataset for hypothesis testing is shown in Figure 1. In the first step, we develop a classification scheme for campaign-related tweets. Next, we evaluate the performance of several classifiers in predicting these categories. Finally, we apply the best-performing classifier to examine how candidates use Twitter as a campaign tool, focusing on differences by experience, competitiveness, and gender. Before outlining each step in detail, we first provide an overview of the Irish electoral system to contextualise the campaign environment in which candidates operate.

The Case of Ireland

Irish elections are conducted under proportional representation with a single transferable vote. This highly personalised electoral system is only used in Ireland and Malta in national elections (Carter et al., 2024). Candidates compete in 3–5 seat constituencies and can only be elected by obtaining one of the seats in their district. Party lists do not exist, making local campaigning a requirement for all candidates. Close links to the constituency and a focus on local issues are central features of Irish campaigns (Farrell et al., 2017). At the same time, candidates also need to address national issues to appear as credible and ambitious politicians.

The personalised nature of elections makes Ireland a good test case for our expectations. The mixture between local issues and more general policy stances introduces variation in our dependent variable. Irish candidates vary in competitiveness due to name recognition and a strong incumbency advantage (Jankowski & Müller, 2021). They also differ in terms of experience and gender. In the 2020 election, at least 30% of a party’s candidates had to be female (Keenan & McElroy, 2017). The variation in our key variables makes Ireland a good case to test our pre-registered hypotheses.

The 2020 general election was historic and has been described as ‘the end of an era’ (1, 2021). For the first time since the founding of the state in 1922, neither of the two traditionally largest (centre-right) parties, Fianna Fáil and Fine Gael, won the popular vote. Instead, Sinn Féin, a left-wing populist party, received the most first-preference votes with 24.5%. The election campaign was dominated by housing and healthcare, with over 60% of respondents in the election study citing one of these two issues as most important in shaping their vote choice (Müller & Regan, 2021). Despite ongoing negotiations between Ireland, the United Kingdom and the EU, Brexit did not play a central role during the campaign.

In the 2020 general election, a large proportion of candidates had active Twitter accounts, with 371 out of the 532 candidates (69.7%) tweeting at least once. Figure 2 shows the proportion of candidates for each party with an active Twitter account. We observe substantive differences across parties, but over 80% of the candidates from the main Irish political parties have an active Twitter account. Twitter is less popular among candidates from the left-wing party Solidarity-PBP, small parties, and independent candidates.

To put these figures in context: in the 2021 German elections, 55% of candidates from the six main parties had an active Twitter account (Sältzer et al., 2021). An analysis of Twitter usage in 2018 across eight European democracies reveals that between 66% (Italy) and 95% (France) of politicians represented in parliament had active Twitter accounts (Silva & Proksch, 2022). Ireland appears to fall somewhere in the middle in terms of Twitter’s use as a political campaigning and communication tool.

Data Collection

In the first step, we identified the candidates running in the 2020 general election and collected the Twitter handles associated with these candidates. We use two sources to identify the Twitter handles of candidates running for office. First, the newspaper TheJournal.ie published an election database3 with information on all candidates. The database includes information on each candidate’s social media accounts. We scraped this information for all candidates. We further refined our list of Twitter handles based on information collected for the Irish Voting Advice Application Which Candidate.4

In the second step, we collected tweets sent by the accounts identified in step 1. Using the rtweet library (Kearney, 2019), we collected all tweets between 1 June 2019 and election day on 8 February 2020. We selected 1 June 2019 as a starting date as it excludes campaign activity for the 2019 European Parliament elections in May 2019. We retrieved the tweets at several points in time to maximise the coverage of tweets, since the Twitter API only allows us to scrape the last 3,200 tweets per account. While we started data collection on 19 December 2019, most of our tweets were retrieved in March 2020. The images of these tweets were downloaded in June 2020 after merging and wrangling the Twitter data. In total, we downloaded 119,048 tweets sent by candidates running for office in the 2020 general election, excluding retweets.

Building a Training Set

Next, we create an annotated training set for automatically classifying tweets containing electioneering and/or policy content. To this end, we draw a stratified random sample of 1,550 tweets from the complete set of tweets that are text-only or tweets that contain text plus an image as follows:

We then asked three trained coders to label each tweet separately into the following two categories:

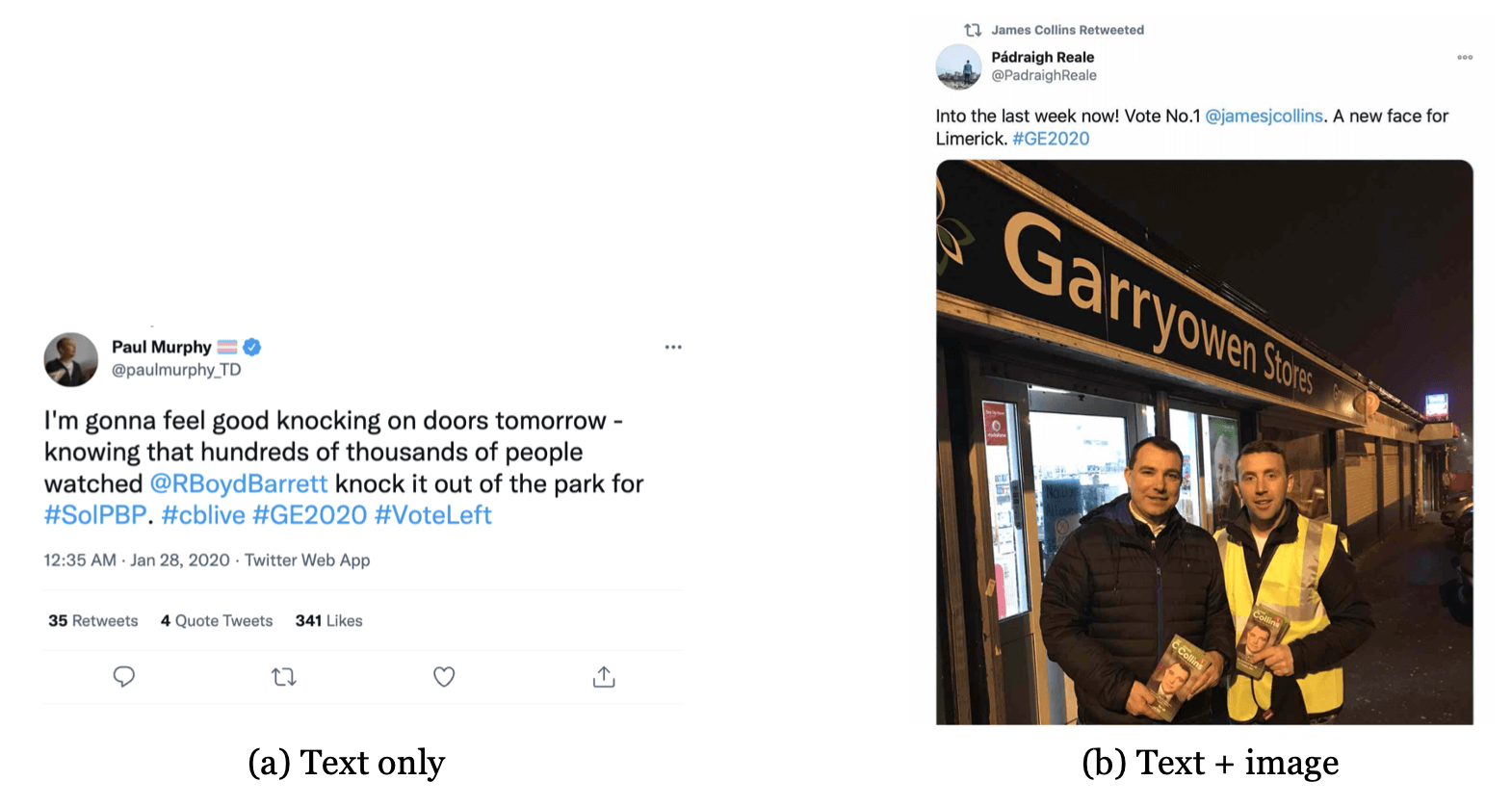

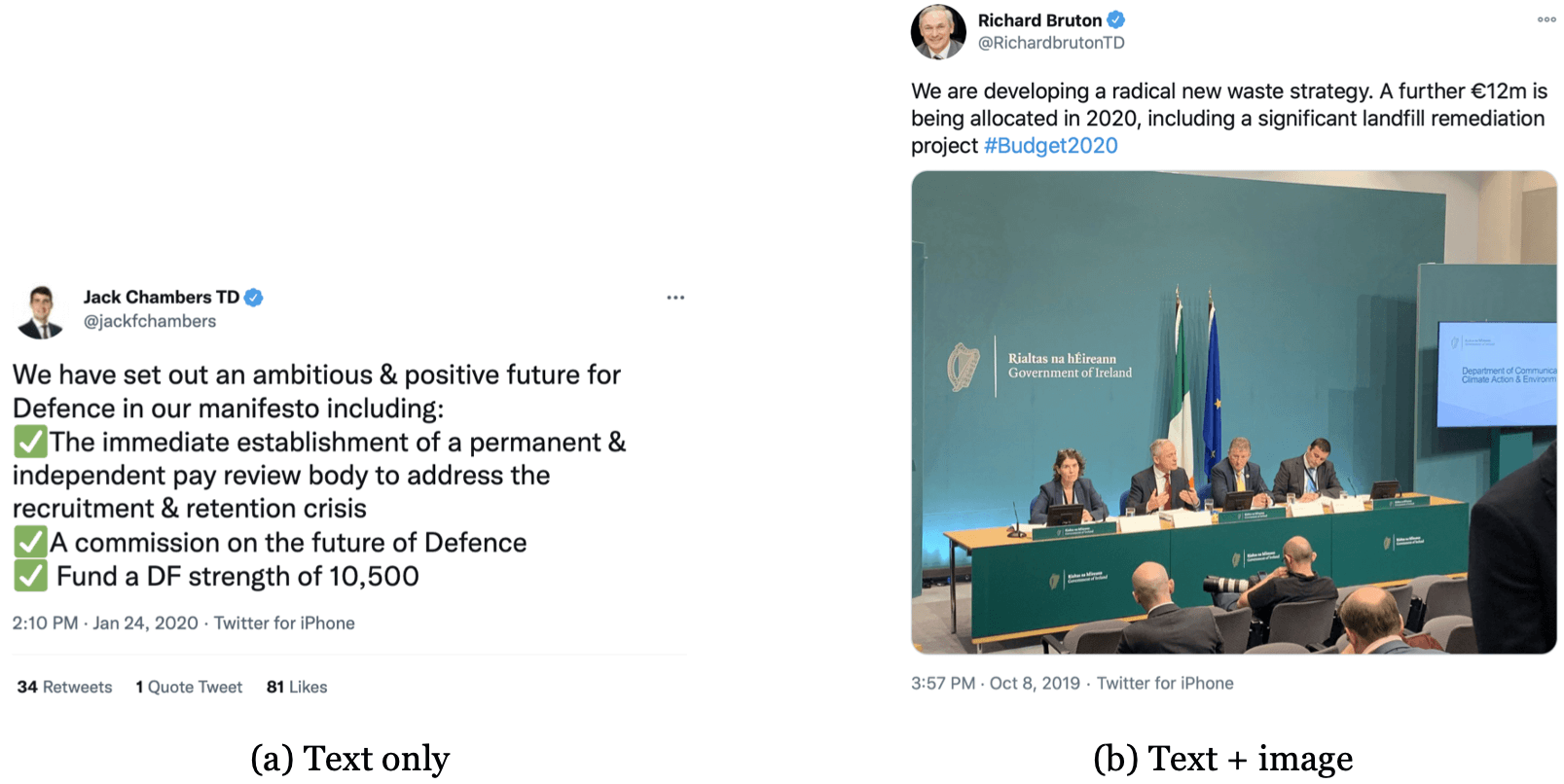

Since both categories were coded separately, each tweet falls into one of four categories: 1) Policy content, 2) Electioneering, 3) Neither, and 4) Both. When a tweet contained image and text, our coders were instructed to consider both when deciding on a categorisation. For text-only tweets, categorisation was based on the text alone. According to our codebook, Electioneering includes tweets relating to canvassing efforts, announcements of (campaign) events, and photographic content about election materials, such as leaflets or posters. Policy content, on the other hand, consists of policy positions, descriptions of policy output or achievements, political competition between politicians, protests, or the promotion of the candidate’s party. Figures 3 and 4 provide typical examples of electioneering and policy content.

We asked the coders to independently consider the full sample of 1,550 tweets. Inter-coder reliability is then assessed using Cohen’s κ.
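As an illustration of the reliability statistic named above, the following minimal sketch computes Cohen’s kappa for one pair of coders on one binary category. The function name and the toy label vectors are hypothetical and are not taken from our data. With three coders, one plausible approach (an assumption here, not a description of our procedure) is to compute the statistic for each pair of coders.

```python
from collections import Counter

def cohens_kappa(labels_a, labels_b):
    """Cohen's kappa for two coders who labelled the same items."""
    if len(labels_a) != len(labels_b) or not labels_a:
        raise ValueError("both coders must label the same non-empty set of items")
    n = len(labels_a)
    # Observed agreement: share of items on which the two coders agree.
    p_observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    # Chance agreement: product of the coders' marginal shares, summed over labels.
    freq_a, freq_b = Counter(labels_a), Counter(labels_b)
    p_expected = sum(freq_a[c] * freq_b[c] for c in freq_a) / (n * n)
    if p_expected == 1.0:
        return 1.0  # both coders used one identical label throughout
    return (p_observed - p_expected) / (1 - p_expected)

# Hypothetical binary codes (1 = electioneering, 0 = not) from two coders.
coder_1 = [1, 1, 0, 0, 1, 0, 1, 0]
coder_2 = [1, 0, 0, 0, 1, 0, 1, 1]
kappa = cohens_kappa(coder_1, coder_2)
```

In this toy example the coders agree on six of eight tweets, while chance agreement is 0.5, so kappa corrects the raw agreement rate of 0.75 downwards.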

Classification

In the next step of the workflow, we consider three different classification strategies for predicting the categories of tweets, based on different ways of representing their textual content. In each case we evaluate two distinct binary classification tasks: 1) electioneering vs. non-electioneering content; 2) policy vs. non-policy content. All experiments listed below are applied to the annotated training set discussed in the last section.5

Experiment 1 – Bag-of-words: In our first experiment we consider the text in each tweet and attempt to classify whether or not this text can be used to predict electioneering or policy content. In order to prepare the text for classification, we apply traditional text pre-processing steps to the text of tweets to produce a bag-of-words model (i.e., Twitter-specific tokenization, stopword filtering, TF-IDF term weighting, L2 document length normalisation). We then apply three standard classifiers to the resulting pre-processed bag-of-words representation of the tweets of interest (i.e., k-nearest neighbours, linear support vector machine, logistic regression).
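The bag-of-words pipeline can be sketched in a few lines of standard-library Python. This is an illustrative sketch under stated assumptions rather than the code used in our experiments: the tokeniser, the toy stopword list, the smoothed IDF formula, the example tweets, and all function names are hypothetical, and only the k-nearest-neighbours classifier is shown.

```python
import math
import re
from collections import Counter

# Toy stopword list (assumption); a real pipeline would use a fuller list.
STOPWORDS = {"the", "a", "in", "on", "for", "to", "our", "with", "and", "this", "we"}

def tokenize(text):
    """Lower-case the text and keep words, @mentions and #hashtags as tokens."""
    return [t for t in re.findall(r"[@#]?\w+", text.lower()) if t not in STOPWORDS]

def fit_idf(token_lists):
    """Smoothed inverse document frequency for every term in the training texts."""
    n_docs = len(token_lists)
    doc_freq = Counter(term for tokens in token_lists for term in set(tokens))
    return {term: math.log((1 + n_docs) / (1 + df)) + 1 for term, df in doc_freq.items()}

def tfidf_vector(tokens, idf):
    """Sparse TF-IDF weights for one text, scaled to unit L2 length."""
    counts = Counter(t for t in tokens if t in idf)
    weights = {t: c * idf[t] for t, c in counts.items()}
    norm = math.sqrt(sum(w * w for w in weights.values()))
    return {t: w / norm for t, w in weights.items()} if norm else {}

def cosine(u, v):
    """Dot product of two unit-length sparse vectors."""
    return sum(w * v.get(t, 0.0) for t, w in u.items())

def knn_predict(text, train_vectors, train_labels, idf, k=3):
    """Majority vote among the k most similar training tweets."""
    query = tfidf_vector(tokenize(text), idf)
    ranked = sorted(zip(train_vectors, train_labels),
                    key=lambda pair: cosine(query, pair[0]), reverse=True)
    votes = Counter(label for _, label in ranked[:k])
    return votes.most_common(1)[0][0]

# Hypothetical labelled tweets (1 = electioneering, 0 = not).
train_texts = [
    "Out canvassing in the rain tonight with a great team #GE2020",
    "Canvassing team dropping leaflets on the doors this evening",
    "Join our canvassing launch event on Saturday",
    "We need a rent freeze to tackle the housing crisis",
    "Waiting lists show the health service needs more investment",
    "Our housing policy would deliver affordable homes",
]
train_labels = [1, 1, 1, 0, 0, 0]

token_lists = [tokenize(t) for t in train_texts]
idf = fit_idf(token_lists)
train_vectors = [tfidf_vector(tokens, idf) for tokens in token_lists]
prediction = knn_predict("Great canvassing team out on the doors",
                         train_vectors, train_labels, idf)
```

Because every vector has unit L2 length, the dot product equals cosine similarity, so tweet length does not drive the neighbour ranking.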

Experiment 2 – Document embeddings: In recent years document embeddings have established themselves as the state-of-the-art in text classification tasks. Document embeddings are trained on much larger corpora of text (English Wikipedia and the Toronto book corpus in this case) and when combined with transfer learning techniques, significantly improve classifier performance (Pan & Yang, 2010). As in Experiment 1, we consider the text in each tweet and attempt to classify whether or not this text can be used to predict electioneering or policy content. We use a distilled version of BERT, DistilBERT (Sanh et al., 2019), which requires less extensive computational resources than the original full BERT model. This allows us to efficiently apply transfer learning, tailoring the base model using text from the training set of tweets. The resulting document embeddings are then combined with the same three classifiers as in Experiment 1.

Experiment 3 – Sentence embeddings: We also investigate the use of recently-proposed sentence transformer methods for generating dense vector representations from short texts, such as sentences or tweets (Reimers & Gurevych, 2020). These methods can make use of existing pre-trained BERT and RoBERTa models to measure the semantic similarity between such short texts. Again, these representations will be combined with the three traditional classifiers from Experiment 1, trained on annotated tweet labels, and used to predict electioneering or policy content.

To perform a comparison of the relative performance of the different classifiers, for each experiment, we will use

To numerically assess performance, we employ a set of five standard evaluation measures: classification accuracy, precision, recall, F1-measure (Manning et al., 2008, 155–156), and balanced accuracy (BAR) (Brodersen et al., 2010). For precision, recall, and F1-measure, we calculate a single score based on the binary average across both classes.
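For readers less familiar with these measures, the sketch below computes all five from a vector of true and predicted binary labels. It is a simplified illustration: the function name and the toy labels are hypothetical, and the precision, recall, and F1 values here refer to the positive class only, which may differ from the averaging used in our experiments.

```python
def binary_metrics(y_true, y_pred, positive=1):
    """Accuracy, precision, recall, F1 and balanced accuracy for one binary task."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(t == positive and p == positive for t, p in pairs)
    fp = sum(t != positive and p == positive for t, p in pairs)
    fn = sum(t == positive and p != positive for t, p in pairs)
    tn = sum(t != positive and p != positive for t, p in pairs)

    def ratio(numerator, denominator):
        # Return 0.0 instead of failing when a class is never predicted or observed.
        return numerator / denominator if denominator else 0.0

    precision = ratio(tp, tp + fp)
    recall = ratio(tp, tp + fn)          # also called sensitivity
    specificity = ratio(tn, tn + fp)     # recall of the negative class
    return {
        "accuracy": ratio(tp + tn, len(pairs)),
        "precision": precision,
        "recall": recall,
        "f1": ratio(2 * precision * recall, precision + recall),
        "balanced_accuracy": (recall + specificity) / 2,
    }

# Hypothetical imbalanced task: 3 positive and 7 negative tweets.
y_true = [1, 1, 1, 0, 0, 0, 0, 0, 0, 0]
y_pred = [1, 1, 0, 1, 0, 0, 0, 0, 0, 0]
scores = binary_metrics(y_true, y_pred)
```

In this toy example accuracy is 0.8 while balanced accuracy is lower (16/21), which shows why measures that account for class imbalance are reported alongside raw accuracy.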

In short, the transformer-based sentence models perform very well across categories. F1 scores range from about 0.76 for policy content to about 0.84 for electioneering. Moreover, misclassification appears unsystematic since precision and recall are similar across classes. Appendix 2 describes the relevant experimental setup and results in detail. Based on the comparative classification performance scores achieved for both tasks, we identified a sentence-based approach using a distilled RoBERTa base model (distilroberta-v1) combined with an SVM classifier as the most effective combination. Note that we use a separate, unseen 800-tweet hold-out set to verify this choice (see Appendix 3), where we see comparable levels of classifier performance across all evaluation measures. This includes the BAR and F1 measures, which account for any differences arising from an imbalance between class sizes.

Based on the above experiments, we applied this final combination to the entire set of collected tweets. These tweet-level classifications represent the dependent variables in our subsequent analyses. Figure 5 shows the number of tweets per day that fall into each of the four categories. Three patterns stand out. First, we observe a sharp increase in electioneering tweets after the election was announced in January 2020. Second, overall, candidates post more policy-related tweets than electioneering tweets. Tweets that mention both policy and electioneering are rare, but occur more often shortly before the election. Third, the category that mentions neither policy nor electioneering is the most prevalent class. Figure 5 also speaks to the validity of the policy and electioneering categories. We observe a stark jump in electioneering tweets after the general election was called in mid-January 2020. The number of electioneering tweets also increased before the by-elections in November 2019. The number of policy tweets spiked on 8 October 2019. On this day, Finance Minister Paschal Donohoe delivered the so-called ‘Brexit budget’. The budget included funds of over €1 billion to tackle a potential no-deal Brexit, increased carbon taxes and the price of cigarettes, and offered free GP care for children. Candidates belonging to the government supported the budget on social media, while opposition candidates criticised the decisions. Being able to identify these events in Figure 5 speaks to the validity of our classification.

Figure 5: The number of tweets posted each day across the four relevant categories. Each dot marks one day. Lines are based on local polynomial regressions.

We assess the content and validity of the four categories by conducting a keyness analysis for each category (Zollinger, 2024). The analysis identifies typical words for each category across the entire corpus. Simply speaking, we conduct a

Independent Variables

Competitiveness (Hypothesis 1) is measured using betting odds for a candidate to win a seat in each of the 39 electoral districts (Wall et al., 2012). We retrieved the betting odds from the gambling company Paddy Power on 28 January, eleven days before the election. We merge the betting odds for all candidates with their details on the constituency, party, election result, and Twitter account (if available). We convert the fractions (odds used in the UK and Ireland) to probabilities. We measure the competitiveness for candidate

Figure 6 shows the distribution of this competitiveness score for candidates with and without an active Twitter account. Interestingly, candidates who are not very competitive (lower values) also tend not to have an active Twitter account.7
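The conversion from fractional betting odds to probabilities mentioned above can be illustrated as follows. This sketch covers only the raw conversion and makes no claim about the final competitiveness score; the function name, the handling of ‘evens’, and the example odds are hypothetical. Raw implied probabilities also include the bookmaker’s margin, which this sketch does not remove.

```python
from fractions import Fraction

def implied_probability(fractional_odds):
    """Convert fractional odds such as '5/1' or '1/4' to an implied win probability."""
    text = fractional_odds.strip().lower()
    if text in {"evens", "evs"}:
        odds = Fraction(1, 1)
    else:
        numerator, _, denominator = text.partition("/")
        odds = Fraction(int(numerator), int(denominator or 1))
    # Odds of a/b pay a units of profit on a stake of b, so p = b / (a + b).
    return float(1 / (1 + odds))

# Hypothetical odds for a strong favourite, an even-money candidate and an outsider.
examples = {odds: implied_probability(odds) for odds in ["1/4", "evens", "5/1"]}
```

Short odds such as 1/4 translate into a high implied probability (0.8), whereas long odds such as 5/1 translate into a low one (1/6).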

Experience (Hypothesis 2) is measured with an ordinal variable of the number of previous general elections in which a candidate has participated (Yoshinaka et al., 2010). Relying on data from Müller & Kneafsey (2023) we count how many general elections a candidate has previously competed in.8 Gender (Hypotheses 3a and 3b) is measured based on publicly available information on candidates competing in the 2020 election.

Control Variables

Campaign period is a dummy variable denoting whether a tweet was sent during the election campaign. The election was called on Tuesday, 14 January 2020. Tweets sent during the campaign period (14 January through election day) are coded as 1, and tweets sent during the pre-campaign period (1 June 2019 through 13 January 2020) are coded as 0. Time until election is measured using the number of days until election day. Party is measured using a categorical variable denoting the party of the politician. The 2020 Irish general election was contested by Fine Gael, Fianna Fáil, Sinn Féin, the Labour Party, the Social Democrats, the Green Party, Solidarity–People Before Profit, Aontú, several small parties, and Independents. We merge Independent candidates and candidates from the remaining small parties into the category Other Parties/Independent.
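The two time-based controls can be derived directly from a tweet’s date, as the following minimal sketch shows. The dates are those given above; the constant and function names are hypothetical.

```python
from datetime import date

ELECTION_CALLED = date(2020, 1, 14)  # day the election was called
ELECTION_DAY = date(2020, 2, 8)      # polling day

def campaign_period(tweet_date):
    """Return 1 for tweets sent from 14 January 2020 through election day, else 0."""
    return int(ELECTION_CALLED <= tweet_date <= ELECTION_DAY)

def days_until_election(tweet_date):
    """Number of days between the tweet and election day."""
    return (ELECTION_DAY - tweet_date).days

# Hypothetical tweet dates on either side of the campaign start.
pre_campaign = campaign_period(date(2020, 1, 13))
in_campaign = campaign_period(date(2020, 1, 14))
days_left = days_until_election(date(2020, 1, 28))
```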

District magnitude is measured as the number of seats in a constituency (ranging from 3 to 5 seats), while Number of candidates is measured as the number of candidates competing for seats in a given constituency. The Urban-rural divide is captured following the coding by Müller & Regan (2021), which identifies the following constituencies as ‘urban’: Dublin Bay North, Dublin Bay South, Dublin Central, Dublin Fingal, Dublin Mid West, Dublin North West, Dublin Rathdown, Dublin South Central, Dublin South West, Dublin West, Dun Laoghaire, Cork North Central, Cork South Central, and Limerick City. The remaining constituencies are classified as rural.

We also control for experience and embeddedness in the social network. Length of time on Twitter is controlled for as it serves as a proxy for familiarity with the platform. It is measured as the number of days between the election date and the day the candidate opened their Twitter account. Followers denotes the number of followers a candidate had on the date the Twitter data was collected. This is important to control for as it mediates the potential reach of any tweets issued by the candidate.

To account for dynamic variation in behaviour and interactions on Twitter and the interactive nature of communications on the platform, we include a number of controls. Average tweets in last 7 days denotes the rolling average of tweets a candidate sent out in the seven days before a tweet was sent, and aims to capture differences in how much candidates are using Twitter at a particular juncture in the campaign. Retweets and Likes capture the average amount of engagement a candidate gets with their Twitter posts (Boulianne & Larsson, 2021). This is operationalised as the average number of retweets/likes of a candidate’s previous 10 tweets. As an alternative specification, we also use the total number of retweets/likes a candidate received in the previous 7 days.
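One way to construct these lagged controls is sketched below for a single candidate. This is an illustration under stated assumptions, not our replication code: the function name, the dictionary keys, and the toy tweets are hypothetical, and the rolling average is assumed to be the number of earlier tweets in the window divided by the window length in days.

```python
from datetime import datetime, timedelta

def add_rolling_controls(tweets, window_days=7, last_n=10):
    """Add lagged activity and engagement controls to one candidate's tweets.

    `tweets` is a list of dicts with a 'time' (datetime) and 'retweets' (int) key.
    Only tweets sent BEFORE the current tweet enter each control.
    """
    ordered = sorted(tweets, key=lambda t: t["time"])
    rows = []
    for i, tweet in enumerate(ordered):
        earlier = ordered[:i]
        window_start = tweet["time"] - timedelta(days=window_days)
        recent = [t for t in earlier if t["time"] >= window_start]
        previous = earlier[-last_n:]
        rows.append({
            **tweet,
            # Average daily number of tweets in the preceding window.
            "avg_tweets_prev_window": len(recent) / window_days,
            # Mean retweets of the previous tweets; None if there are none yet.
            "avg_retweets_prev_n": (
                sum(t["retweets"] for t in previous) / len(previous) if previous else None
            ),
        })
    return rows

# Hypothetical tweets by one candidate.
sample = [
    {"time": datetime(2020, 1, 1, 9), "retweets": 2},
    {"time": datetime(2020, 1, 3, 9), "retweets": 4},
    {"time": datetime(2020, 1, 5, 9), "retweets": 6},
    {"time": datetime(2020, 1, 20, 9), "retweets": 0},
]
controls = add_rolling_controls(sample)
```

Using only earlier tweets keeps each control strictly prior to the outcome it is meant to explain.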

We also need to control for a candidate’s overall attention to electioneering and/or policy content and audience feedback on this activity. To do so we include variables capturing the Number of electioneering tweets in the last 10 tweets, and the Average number of retweets of the last ten electioneering tweets. We include the same for tweets containing policy content.

Models

Since our dependent variable has four categories with no natural ordering, we employ a multinomial logistic regression. We cluster standard errors at the candidate level. The general model – as set out in our pre-assessment plan9 – is specified as follows:

In this equation

Our dataset covers two distinct periods where one can expect candidate behaviour on Twitter to vary. The first period covers the time between the previous European election in May 2019 and the announcement of the 2020 general election in January 2020. The second period covers the campaign period between the announcement of the election and the vote in February 2020.

Model 1 takes the model structure specified in equation 1 and uses the following set of covariates to examine differences between the campaign period and the pre-campaign period through the inclusion of a series of interaction terms. The model is specified as follows:

Our second model seeks to account for campaign and audience interaction dynamics. To do so we estimate a regression model (Model 2) including the following covariates:

We include a series of further robustness checks in Appendix 4 using the alternative variable specifications discussed above.

Results

We start the discussion of our results with a purely descriptive analysis of electioneering and policy tweets during the 2020 Irish campaign. For each candidate, we aggregate the proportions of tweets that fall into the four categories. Figure 7 shows the distribution of electioneering and policy tweets for different values of the independent variables of interest. Each dot marks one candidate. Starting with competitiveness, which we separate into terciles for a more straightforward comparison, we observe that very competitive candidates send more messages about policy than candidates with low competitiveness, who send slightly more electioneering tweets. The second panel shows that candidates who ran previously post more policy tweets than candidates who have not competed in an Irish general election before. The third panel reveals only small differences in electioneering and policy tweets between male and female candidates.
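Splitting a continuous variable into terciles, as done for competitiveness in the descriptive analysis, can be sketched as follows. The function name, the group labels, and the use of the standard library's default quantile method are illustrative assumptions; the text does not state how the cut points were computed.

```python
from bisect import bisect_left
from statistics import quantiles

def tercile_labels(values):
    """Assign each value to the low, medium or high third of the sample."""
    # quantiles(..., n=3) returns the two cut points separating the thirds.
    cuts = quantiles(values, n=3)
    names = ["low", "medium", "high"]
    # bisect_left counts how many cut points lie strictly below the value.
    return [names[bisect_left(cuts, v)] for v in values]
```

Values that fall exactly on a cut point are placed in the lower group under this rule.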

Next, we turn to the interpretation of our multinomial logistic regression models. Table 2 details the results of our analysis, capturing the relations between the variables of interest. The baseline category contains tweets that have no electioneering or policy content. We exponentiate coefficients in order to obtain relative-risk ratios and plot a selection of these relative-risk ratios in Figure 8 to aid in the interpretation of the substantive size of the relationships detected.
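The transformation from coefficients to relative-risk ratios is a simple exponentiation, illustrated in the sketch below. The coefficient value is hypothetical and chosen only to show the arithmetic; it is not taken from Table 2.

```python
import math

def relative_risk_ratio(coef: float) -> float:
    """Exponentiate a multinomial-logit coefficient to get a relative-risk ratio."""
    return math.exp(coef)

def percent_change(rrr: float) -> float:
    """Express a relative-risk ratio as a percentage change in relative risk,
    measured against the baseline category."""
    return (rrr - 1.0) * 100.0

# Hypothetical coefficient, used purely for illustration.
beta = 0.174
rrr = relative_risk_ratio(beta)
print(f"RRR = {rrr:.2f}, change = {percent_change(rrr):.0f}%")
```

A ratio above 1 indicates that a one-unit increase in the covariate raises the risk of the category relative to the baseline category, while a ratio below 1 indicates that it lowers it.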

Table 2: Model 1 - Multinomial logistic regression analysis with clustered standard errors. Note: z statistics in parentheses; * p < 0.05, ** p < 0.01, *** p < 0.001.

Hypothesis 1 states that more competitive candidates are less likely to send out electioneering tweets and more likely to send out policy tweets. The results from Model 1 suggest that these relationships do not hold. Competitiveness has no observed effect on tweeting about electioneering or policy. This finding holds across a range of alternative model specifications (see Appendix 4) and suggests that a candidate’s likelihood of winning a seat is not significantly associated with their online campaign strategy.

Hypothesis 2 holds that more experienced candidates are more likely to send out policy tweets and less likely to send out electioneering tweets than less experienced candidates. We observe a positive relationship between previous campaign experience and tweeting about policy only. Each additional electoral campaign experienced increases the relative risk of tweeting about policy by 19%. We observe no effect of campaign experience on the other categories of interest (electioneering; both). Again, these results are robust to a series of alternative model specifications, as shown in Appendix 4.

Hypotheses 3a and 3b propose that candidates’ tweet content differs by gender. Specifically, we expected that female candidates would display more electioneering activity (H3a) and a stronger policy focus (H3b) compared to their male counterparts. However, our model offers little evidence to support these expectations.10 Overall, the results suggest that male and female candidates do not differ substantially in their use of Twitter for electioneering or policy messaging during the campaign.

Model 1 reveals clear differences between the pre-campaign and campaign periods, indicating that candidates’ online behaviour evolves over time. To investigate how campaign dynamics influence this behaviour more closely, Model 2 (Table 3) introduces controls for the level of effort each candidate devotes to electioneering and policy, as well as the audience engagement these tweets receive, measured by retweets. The aim is to assess whether recent communication patterns - and the feedback they generate - influence a candidate’s strategic decision about what type of content to post (electioneering, policy, or both). As in previous figures, Figure 9 presents the results using relative risk ratios.

Table 3: Model 2 - Multinomial logit regression analysis with clustered standard errors. Note: z statistics in parentheses; * p < 0.05, ** p < 0.01, *** p < 0.001.

As before, we find little evidence in support of effects for H1 (candidate competitiveness) and H3 (gender), but our results for H2 (campaign experience) now appear significant. The categories electioneering, policy, and both are each found to be positively associated with experience when one controls for campaign dynamics. Each extra campaign experienced by a candidate is associated with an increase in relative risk of 5% for electioneering, 9% for policy, and 6% for both. This is in line with our expectation about how experience influences policy focus, but contradicts our expectation about electioneering focus, suggesting there is no substitution effect here: more experienced candidates communicate more about both electioneering and policy than their less experienced counterparts, and this effect is cumulative.
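If experience enters the model linearly as a count of previous campaigns (an assumption about the specification), the per-campaign relative-risk ratios compound multiplicatively rather than additively. A worked illustration using the reported 9% figure for policy, with k denoting a hypothetical number of additional campaigns:

```latex
\mathrm{RRR}(k) = \left(e^{\beta}\right)^{k} = \mathrm{RRR}^{k},
\qquad 1.09^{3} \approx 1.295
```

Under that assumption, three additional campaigns would correspond to a relative risk of a policy tweet roughly 30% higher, measured against the baseline category.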

We also note effects associated with the variables capturing campaign dynamics related to a candidate’s recent focus on electioneering and policy content at any given point in the campaign. Each extra tweet about electioneering in the previous ten increases the likelihood of another electioneering-related tweet by 32%. The effect is similar for previous policy focus (29% increase). Audience feedback only seems to matter for previous electioneering tweets, but the size of the effects in question is substantively very small. It appears that a candidate’s recent past behaviour is a good predictor of current behaviour, but that positive feedback in terms of retweets matters little in encouraging the broadcast of further policy or electioneering content.

Conclusion

Social media have become an indispensable, low-cost tool for political candidates campaigning for office. But we still know little about how precisely politicians make use of such networks in their political campaigns. In this study we addressed this question by analysing how candidates in the 2020 general election in Ireland used Twitter to signal their policy credentials or to boost the visibility of their campaign activities, and how these priorities varied across politicians. In doing so, our study offers both methodological and substantive contributions to our understanding of online campaigning.

A methodological challenge in studying online campaigns is classifying social media posts into categories that are both meaningful and politically relevant. Our study offers a reproducible pipeline for doing precisely that, enabling large-scale analysis of online campaign content in a systematic and scalable way. The candidates who ran in the 2020 election posted more than 122,000 tweets between June 2019 and election day in February 2020 (excluding retweets). The workflow we developed to categorise these tweets relied on extensive manual coding in combination with a set of state-of-the-art supervised machine learning approaches and data representations to leverage this hand coding to classify tweets at scale. In a series of experiments, we first demonstrate that a supervised machine-learning approach that relies on transformer-based sentence embeddings and transfer learning can successfully capture differences in how these candidates present themselves online. Our results show that a combination of transformer-based sentence embeddings, task-specific training sets, and an appropriate decision algorithm can reliably identify electioneering and policy content.

Substantively, our analysis shows that the 2020 Irish election campaign on Twitter was policy-focused: over 30% of tweets from candidates included policy content, while fewer than 10% were explicitly about electioneering. Importantly, we also find that candidates do not use Twitter in the same way. Consistent with our expectations, we find that candidates with prior campaign experience tweet significantly more about policy than their less experienced counterparts. We suggest that experienced candidates are more likely to have concrete policy achievements to highlight, and using Twitter to showcase these accomplishments helps them distinguish themselves from newer candidates. Contrary to our expectations, we find that prior campaign experience is also positively associated with electioneering content, at least when previous engagement with such posts is taken into account. This suggests that, as candidates gain experience, policy-related and electioneering content tend to complement rather than substitute for one another. Also unexpected is the lack of any consistent effect of competitiveness or gender on the likelihood of tweeting about electioneering or policy. Less competitive candidates who are active on Twitter appear just as likely to post about electioneering and/or policy content as their more competitive peers. We should note, however, that the least competitive candidates were largely absent from Twitter in the first place (see Figure 6), so our findings apply primarily to those with at least moderate electoral prospects. Finally, we find no consistent gender-based differences in communication behaviour: male and female candidates were equally likely to tweet about both electioneering and policy.

While Ireland offers an instructive test case given its candidate-centred electoral system, multi-member constituencies, and strict campaign spending limits, our findings may not fully generalise to other contexts. In systems where party labels play a more dominant role, or where social media is used differently by candidates or voters, the relationship between political experience and social media strategy may take a different form. Future research should explore whether similar patterns hold in more party-centred systems, or in countries where the incentives for individual candidate branding are less pronounced. The pipeline we propose for categorising campaign tweets lends itself to comparative, cross-country analysis. This opens up exciting possibilities for examining the generalisability of our findings to other electoral systems and campaigns.

Our study also raises several questions for future research. Future studies could, for example, tease out the drivers of the link between political experience and online campaign strategies that our analysis has uncovered. While we argue that policy credentials play a central role, alternative explanations (such as differences in campaign resources, staff support, or digital literacy) merit further exploration.

In addition, future work could test whether similar patterns emerge on other social media platforms. We focus on Twitter because it was the most widely used social media platform among candidates during the 2020 election, and because tweets were frequently cited in traditional media coverage. Since then, however, the social media landscape has shifted towards more multimodal platforms, such as Instagram and TikTok. Applying recent advances in multimodal classification could help determine whether our findings hold across these newer, visually oriented platforms.

Online Appendix and Data Availability Statement

The online appendices and materials required to verify the computational reproducibility of the results, procedures, and analyses in this article are available on Harvard Dataverse, at: https://doi.org/10.7910/DVN/YIRC0U. Due to Twitter’s/X’s data-sharing policies, the texts of tweets cannot be shared publicly.